The AI Act wants people to know when they are interacting with AI-generated or manipulated content such as deepfakes, but how is this achieved in practice? A new (currently draft) Code of Practice on Transparency of AI-Generated Content (the ‘Code’), which organisations can voluntarily sign up to, could help.

What does the Code cover?

The Code is in two parts.

- Section 1 is for providers of AI systems (including general-purpose AI systems) and covers the rules under the Act that they must follow on the marking and detection of AI-generated and manipulated content. This includes ensuring that outputs of AI systems (i.e. any audio, image, video or text content) are marked in a machine-readable format and are detectable as artificially generated or manipulated, and that the technical solutions used to do this are (as far as technically feasible) effective, interoperable, robust and reliable.

- Section 2 is for deployers of AI systems (a wider category, capturing organisations that use AI technologies provided by other companies). It covers the deployer rules on: (i) labelling deepfakes (i.e. image, audio or video content which resembles existing people, objects, places, events etc. and falsely appears authentic), for which the first version of the Code discusses the use of a common taxonomy; and (ii) labelling certain AI-generated and manipulated text (i.e. publications informing the public on matters of public interest which have not undergone human review).

Each section of the Code contains a number of commitments, measures and (in some cases) sub-measures to illustrate how to comply with these AI Act obligations. For example:

- AI Act obligation: providers of AI systems have an obligation under Article 50(2) of the Act to mark the outputs of generative AI systems in a machine-readable manner.

- Code Commitment: To fulfil this obligation, the Code commits signatories to implementing a multi-layered approach of active marking techniques with regard to the text, image, video or audio content (or any combination of them) generated or manipulated by the relevant AI system. Signatories recognise this is necessary as no single marking technique is currently sufficient.

- Measures and sub-measures: One sub-measure to help fulfil this commitment is to add information about the provenance of the content, and a signature of the generative AI system, into the metadata where content is generated or exported in a data format which supports this. Other examples include interwoven watermarking and fingerprinting or logging.

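To make the metadata sub-measure above more concrete, the following is a minimal Python sketch of two possible layers: a signed provenance record that could be embedded in a file's metadata, and a provider-side fingerprint log. It is illustrative only. The draft Code does not prescribe this format; the field names, the `SIGNING_KEY` value and every function name are hypothetical, and an exact SHA-256 hash stands in here for the more robust fingerprinting a real system would need.

```python
import hashlib
import hmac
import json

# Hypothetical signing key. A real provider would use managed keys and,
# most likely, asymmetric signatures rather than a shared secret.
SIGNING_KEY = b"example-key-not-for-production"

# Layer 2 (assumption): provider-side log of fingerprints for later lookup.
fingerprint_log = set()


def build_provenance_record(content: bytes, system_id: str) -> dict:
    """Layer 1: build a signed, machine-readable provenance record."""
    record = {
        "ai_generated": True,          # hypothetical field name
        "generator": system_id,        # hypothetical system identifier
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    # Sign a canonical (sorted-key) JSON encoding of the record.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return record


def verify_provenance_record(content: bytes, record: dict) -> bool:
    """Check that the record is untampered and matches the content."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, record.get("signature", ""))
    hash_ok = unsigned.get("content_sha256") == hashlib.sha256(content).hexdigest()
    return signature_ok and hash_ok


def mark_output(content: bytes, system_id: str) -> dict:
    """Apply both layers: create the record and log the fingerprint."""
    record = build_provenance_record(content, system_id)
    fingerprint_log.add(record["content_sha256"])
    return record


def was_generated_here(content: bytes) -> bool:
    """Detection fallback for content whose metadata has been stripped."""
    return hashlib.sha256(content).hexdigest() in fingerprint_log


if __name__ == "__main__":
    output = b"synthetic image bytes"
    record = mark_output(output, "example-image-system")
    print(json.dumps(record, indent=2))
    print(verify_provenance_record(output, record))
    print(was_generated_here(output))
```

An exact hash changes as soon as content is re-encoded or cropped, so a sketch like this would not be robust on its own. That limitation illustrates why signatories recognise that no single marking technique is currently sufficient.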

The Code also sets out governance and documentation expectations designed to demonstrate compliance, and has wider implications across the AI supply chain. This is particularly the case for signatories that are also model providers: although the Act imposes separate system-level obligations, the Code imposes model-level obligations on those signatories. For example, to support downstream compliance, signatories providing open‑weight models are expected to implement structural marking techniques encoded in the weights during model training. Likewise, where a signatory also provides generative AI models, it must implement machine-readable marking techniques for the content generated or manipulated by its models before the model is placed on the market.

Status of the Code

The Code, which is referenced in the AI Act, is very much in draft form at present, with more detail expected in future iterations. It is being drafted in an ‘inclusive process’ and its content is being refined by two working groups (one focussed on the provider section, the other on the deployer section). Both working groups are also reviewing cross-cutting issues such as promoting co-operation across the AI supply chain and the interaction between the transparency obligations imposed on providers and those imposed on deployers.

Once the Code is finalised, it is intended to be a “guiding document for demonstrating compliance” with the relevant transparency obligations. As with the equivalent code for the Act’s GPAI rules (see our blog), organisations can voluntarily sign up to it, but do so in the knowledge that: (i) alternative routes to compliance may exist; and (ii) adherence to the Code does not guarantee compliance.

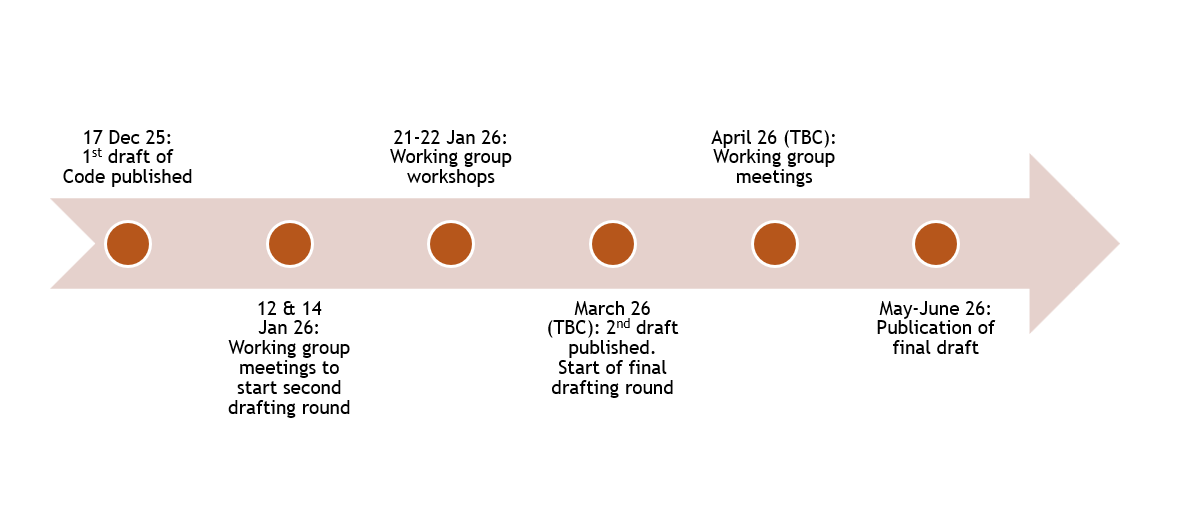

Timings of the Code

The transparency rules apply from this summer (2 August 2026), and the aim is to have the Code agreed in advance of this. The Digital Omnibus may give providers of AI systems put on the market before this date an additional six-month grace period to comply with their obligations around the marking and detectability of AI-generated content (meaning they must comply by 2 February 2027; see our blog). However, this still leaves timings relatively tight, given the final version of the Code is currently expected this May/June (see the full timeline below for more information). Providers and deployers of relevant AI systems should therefore start engaging with the draft Code now, and we will be tracking (and sharing) its changes as it develops further.

Update: The second version of the Code was published on 5 March. It streamlines and simplifies the Code in some places, promotes the use of open standards for AI content marking (providing examples of a potential EU icon that could be used for this) and makes certain elements of the Code which go beyond the AI Act voluntary. More details on the changes, and on the contents of the second draft, are available here.

In other transparency news…

The EU is not the only jurisdiction looking to tackle risks around deepfakes. As well as introducing a new law criminalising the creation of sexually explicit deepfakes, the UK Government is working with Microsoft and other technology companies to implement a ‘deepfake detection evaluation framework’ to assess detection tools and technologies. Once the testing framework is established, it will “be used to set clear expectations for industries on deepfake detection standards”, according to the Government.

Code Timeline

Note: We will be tracking the development of this Code. Given that its content is likely to change in future drafts (something we saw with the equivalent GPAI code), we will provide a more detailed analysis closer to its finalisation.
